2025

Graph Sparsification Via Mixture of Graphs

Guibin Zhang†, Xiangguo Sun†, Yanwei Yue†, Chonghe Jiang, Kun Wang, Tianlong Chen, Shirui Pan († equal contribution)

International Conference on Learning Representations (ICLR) 2025 Spotlight

We introduce Mixture-of-Graphs (MoG), leveraging the concept of Mixture-of-Experts (MoE), to dynamically select tailored pruning solutions for each node. Specifically, MoG incorporates multiple sparsifier experts, each characterized by unique sparsity levels and pruning criteria, and selects the appropriate experts for each node. Subsequently, MoG performs a mixture of the sparse graphs produced by different experts on the Grassmann manifold to derive an optimal sparse graph.

Cut the Crap: An Economical Communication Pipeline for LLM-based Multi-Agent Systems

Guibin Zhang†, Yanwei Yue†, Zixun Li†, Sukwon Yun, Guancheng Wan, Kun Wang*, Dawei Cheng, Jeffrey Xu Yu, Tianlong Chen († equal contribution, * corresponding author)

International Conference on Learning Representations (ICLR) 2025 Poster

We propose an economical, simple, and robust multi-agent communication framework, termed AgentPrune, which integrates seamlessly into mainstream multi-agent systems and prunes redundant or even malicious communication messages. Technically, AgentPrune is the first to identify and formally define the communication redundancy issue present in current LLM-based multi-agent pipelines, and efficiently performs one-shot pruning on the spatial-temporal message-passing graph, yielding a token-economic and high-performing communication topology.

2024

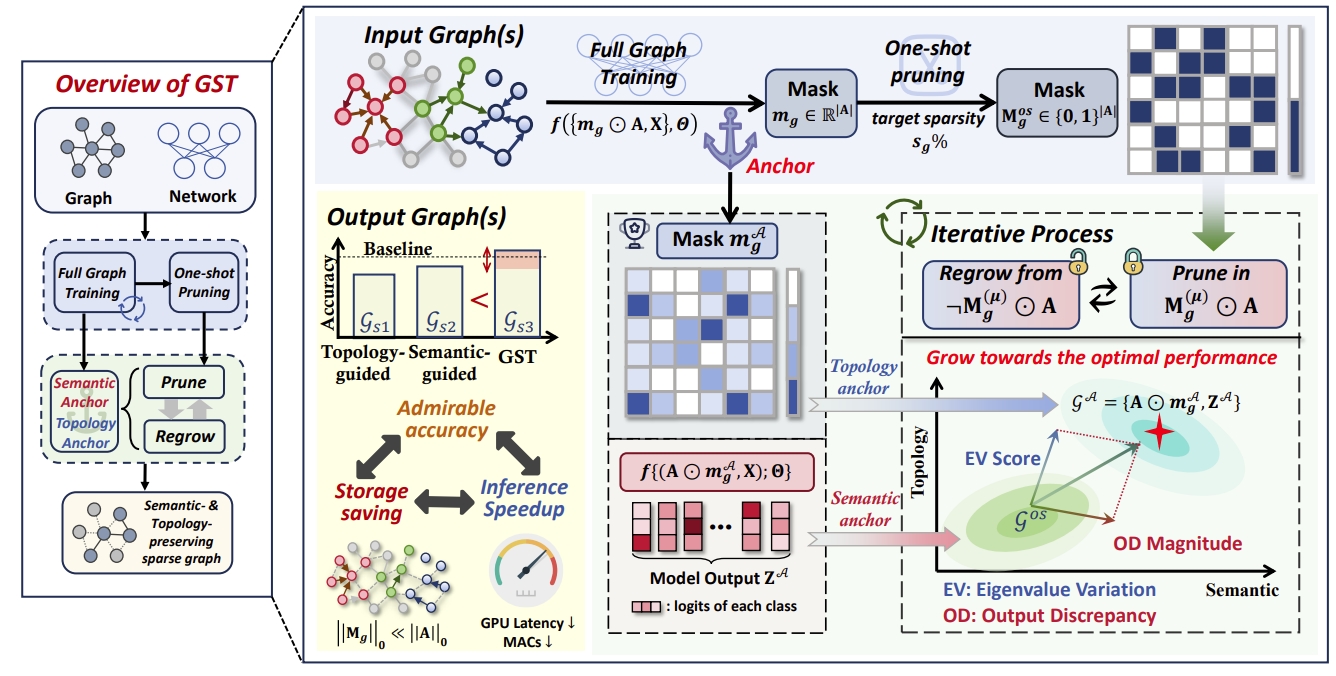

Fast Track to Winning Tickets: Repowering One-Shot Pruning for Graph Neural Networks

Yanwei Yue†, Guibin Zhang†, Haoran Yang, Dawei Cheng* († equal contribution, * corresponding author)

Association for the Advancement of Artificial Intelligence (AAAI) 2025 Poster

We propose a fast track for one-shot pruning: the graph is pruned to the target sparsity in a single step, and the edge mask is then gradually optimized. Our framework achieves a double win for graph lottery tickets: higher sparsity and faster speed.

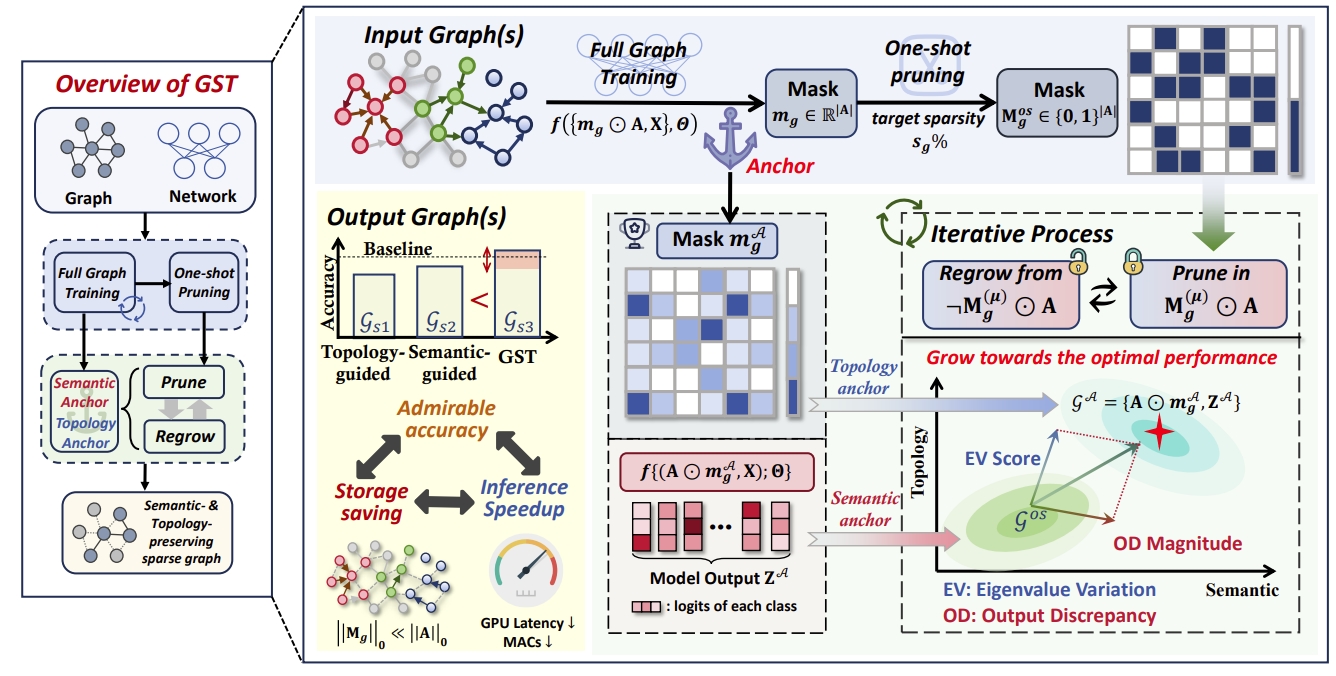

Two Heads Are Better Than One: Boosting Graph Sparse Training via Semantic and Topological Awareness

Guibin Zhang†, Yanwei Yue†, Kun Wang*, Junfeng Fang, Yongduo Sui, Kai Wang, Yuxuan Liang, Dawei Cheng, Shirui Pan, Tianlong Chen* († equal contribution, * corresponding author)

International Conference on Machine Learning (ICML) 2024 Poster

We combine topology-guided and semantic-based pruning. Limited training on the original graph first builds a reliable anchor; dynamic sparse training then uses this anchor as a semantic and topological benchmark to obtain better sparse graphs.

Graph Lottery Ticket Automated

Guibin Zhang†, Kun Wang†, Wei Huang, Yanwei Yue, Yang Wang, Roger Zimmermann, Aojun Zhou, Dawei Cheng, Jin Zeng*, Yuxuan Liang* († equal contribution, * corresponding author)

International Conference on Learning Representations (ICLR) 2024 Poster

We train an edge learner from a global perspective and learn row-wise thresholds from a node-level perspective, pruning through trainable edge weights and threshold vectors. The result is an adaptive, dynamic, and automatic process for finding winning tickets.
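
As a rough illustration of the row-wise thresholding idea in the entry above, the sketch below keeps an edge only when its weight reaches the threshold of its source node. It is not the paper's implementation: the thresholds here are fixed numbers rather than trained vectors, and the function names `prune_rowwise` and `edge_sparsity` are hypothetical.

```python
from typing import List

Matrix = List[List[float]]


def prune_rowwise(edge_weights: Matrix, thresholds: List[float]) -> Matrix:
    """Zero out every edge (i, j) whose weight is below node i's threshold.

    Assumption: a weight of 0.0 means "no edge", and each row i of
    `edge_weights` holds the outgoing edge weights of node i.
    """
    if len(edge_weights) != len(thresholds):
        raise ValueError("need exactly one threshold per row")
    pruned = []
    for row, threshold in zip(edge_weights, thresholds):
        # Each node applies its own threshold to its own row.
        pruned.append([w if (w != 0.0 and w >= threshold) else 0.0 for w in row])
    return pruned


def edge_sparsity(original: Matrix, pruned: Matrix) -> float:
    """Fraction of the original edges that were removed by pruning."""
    before = sum(1 for row in original for w in row if w != 0.0)
    after = sum(1 for row in pruned for w in row if w != 0.0)
    return 0.0 if before == 0 else 1.0 - after / before


if __name__ == "__main__":
    weights = [
        [0.0, 0.9, 0.2],
        [0.4, 0.0, 0.6],
        [0.1, 0.8, 0.0],
    ]
    per_node_thresholds = [0.5, 0.5, 0.3]
    sparse = prune_rowwise(weights, per_node_thresholds)
    print(sparse)
    print(edge_sparsity(weights, sparse))
```

Because each row has its own threshold, different nodes can end up with different local sparsity levels, which is the point of learning thresholds per node rather than applying one global cutoff.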